This week saw the launch of Google Bard, which has had a somewhat rocky start.

One user was informed by the AI chatbot that it had been trained using data from Gmail and other sources.

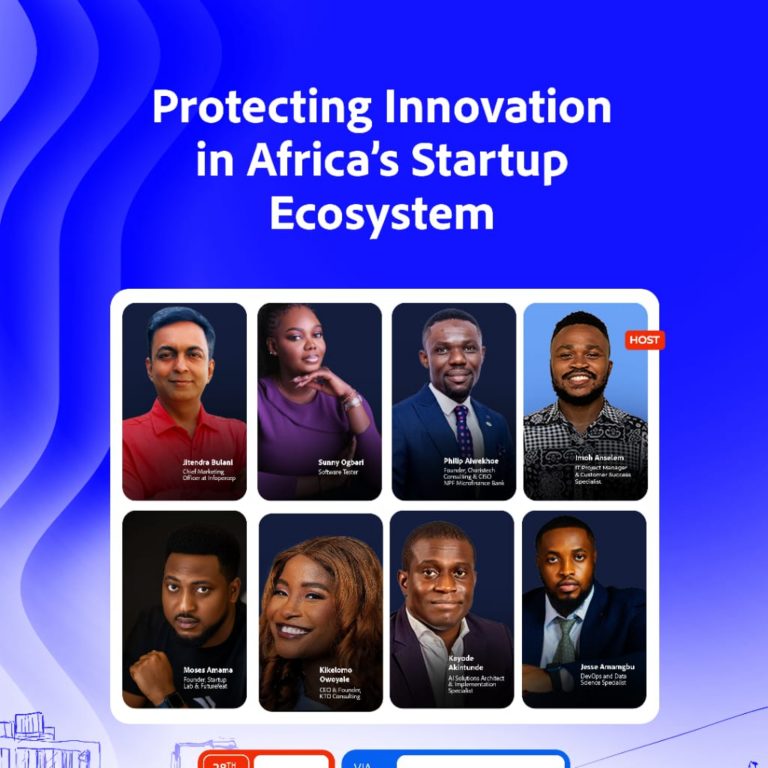

TechEconomy understands that privacy, which involves the control of personal information, is central to many of the social concerns raised by new information technologies.

It would be a complete privacy violation if Bard were actually trained on data from Gmail, which has millions of users.

However, Google later claimed this was untrue, saying that Bard is an “early experiment” that “will make mistakes.”

“Bard is an early experiment based on Large Language Models and will make mistakes. It is not trained on Gmail data,” the company said in a tweet.

In another response, which has since been deleted, Google also said, “No private data will be used during Barbs [sic] training process.”

In Bard’s initial response to Crawford, the chatbot said it was also trained using “datasets of text and code from the web, such as Wikipedia, GitHub, and Stack Overflow,” as well as data from companies that “partnered with Google to provide data for Bard’s training.”

Google CEO Sundar Pichai has told employees to expect errors as people begin using Bard.

“As more people start to use Bard and test its capabilities, they’ll surprise us. Things will go wrong,” he wrote in an email to staff on Tuesday, published by CNBC.
