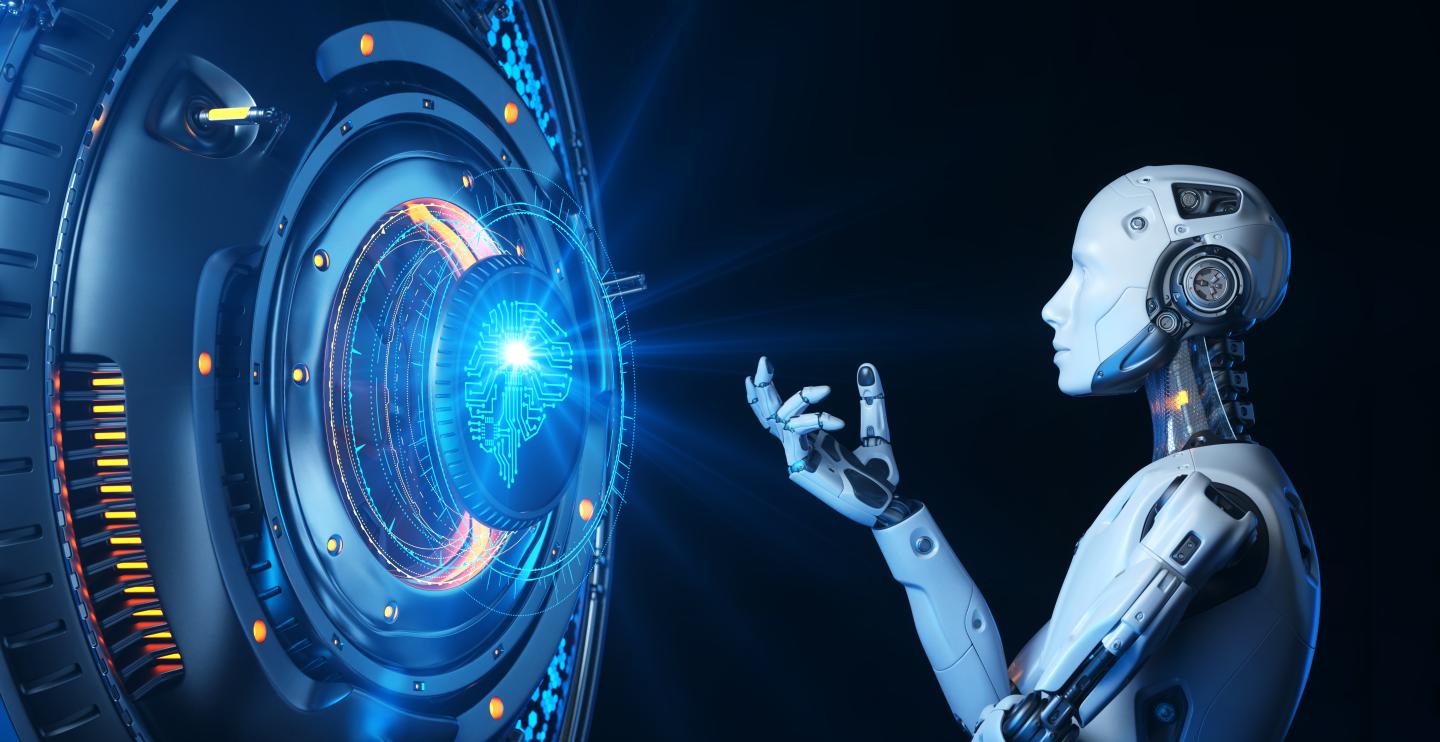

In 2018 at the World Economic Forum in Davos, Google CEO Sundar Pichai had something to say: “AI is probably the most important thing humanity has ever worked on. I think of it as something more profound than electricity or fire.”

Pichai’s statement was met with a healthy amount of skepticism. But almost five years later, it seems even more prophetic.

Artificial intelligence is currently the most widely discussed subject in the technology industry. Ordinarily, a technology with such vast potential should not be something to worry about. In reality, the opposite is true.

Experts in the tech space are beginning to worry about what the future holds for humans if this technology keeps demonstrating new capabilities.

AI technology has advanced rapidly and gained popularity in recent years. Many AI tools that help with human tasks already existed, but the most recent one, ChatGPT, raised some eyebrows.

ChatGPT was launched as a prototype on November 30, 2022. It garnered attention for its detailed responses and articulate answers across many domains of knowledge. Its uneven factual accuracy, however, has been identified as a significant drawback.

According to OpenAI, GPT-4 can process up to 25,000 words at once, about eight times as many as the original ChatGPT could handle.

Just last week, more than 1,000 tech leaders signed a petition calling for a pause on “giant AI experiments” in response to the release of GPT-4.

“AI systems with human-competitive intelligence can pose profound risks to society and humanity,” said the open letter titled “Pause Giant AI Experiments.”

“Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable,” it said.

Former Google CEO Eric Schmidt said in a recent interview with ABC, monitored by TechEconomy, that AI technology, which has been expanding rapidly, has many advantages, but the industry has to ensure the technology “doesn’t harm but just helps.”

He added that the technology has promise, but there are concerns.

He said the industry has to work to find “guardrails” to prevent negative impacts on democracy.

“Well, imagine a world where you have an AI doctor that makes everyone healthier in the whole world; imagine a world where you have an AI tutor that increases the educational capability of everyone in every language globally.

“These are remarkable. And these technologies, which are generally known as large language models, are going to do this.”

He continued: “But, at the same time, we face extraordinary new challenges from these things, whether it’s the deep fakes that you’ve discussed, or what happens when people fall in love with their AI tutor?”

Over the past year, AI technology has come under growing criticism as new applications have been released, such as ChatGPT, a bot that can weave sentences together in response to commands, thanks to large amounts of data and algorithms.

Despite widespread praise for the technology’s capabilities, several business executives and academics have called for a pause in its development due to worries about the spread of false information, the automation of human labor, and the dangers of deep fakes.

Schmidt said he’s concerned about how AI can be used in “biology, or in cyberattacks, or in that sort of thing, and especially in manipulating the way the body politic works, and in particular how democracies work.”

Schmidt noted that the technology can harm democracy, particularly because people are taught to believe what they see and hear, and AI can produce very realistic-looking images that help misinformation spread rapidly.

The tech leader said the entire industry needs to come together to figure out appropriate “guardrails” because the technology is only going to get bigger.

“Everyone’s focused on the bias, which is certainly a problem, and it’s being worked on. But the real problem is that when these systems are used to manipulate people’s day-to-day lives, literally the way they think, what they choose, and so forth, it affects how democracies work,” Schmidt said.