Kaspersky is exploring how the appearance of ChatGPT in the hands of the general public could change the established rules of the cybersecurity world.

The move comes a few months after OpenAI released ChatGPT, one of the most powerful AI models to date. ChatGPT can explain complex scientific concepts better than many teachers, write music and generate almost any text based on the user’s request.

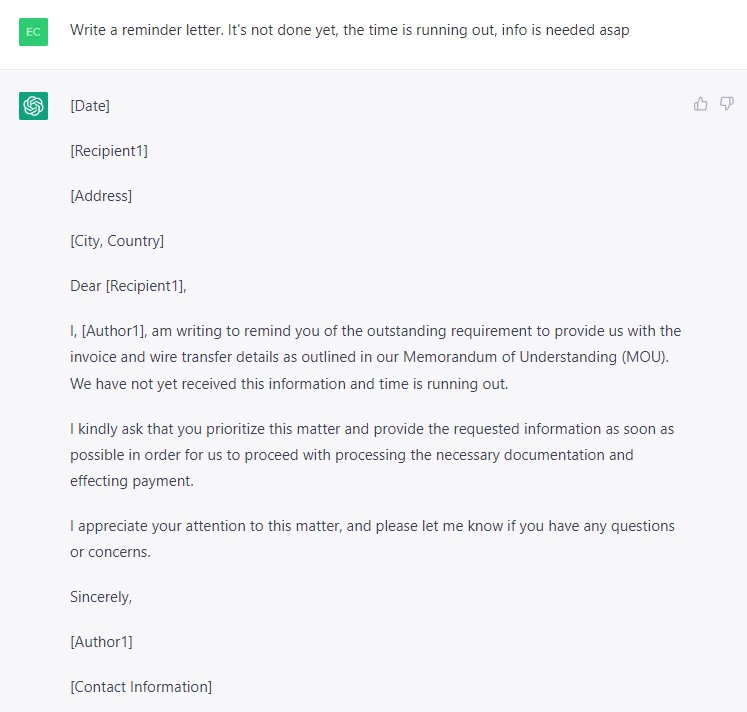

ChatGPT is primarily an AI language model that creates convincing texts which are difficult to distinguish from those written by humans. Hence, cybercriminals are already trying to apply this technology to spear-phishing attacks.

Previously, the main hurdle stopping them from running mass spear-phishing campaigns was the cost of writing each targeted email.

ChatGPT is set to drastically alter the balance of power, because it might allow attackers to generate persuasive and personalised phishing emails on an industrial scale. It can even stylise correspondence, creating convincing fake emails that appear to be sent from one employee to another. Unfortunately, this means that the number of successful phishing attacks may grow.

Many users have already found that ChatGPT is capable of generating code, but unfortunately this includes the malicious type. Creating a simple infostealer will be possible without having any programming skills at all.

However, straight-arrow users have nothing to fear. If code written by a bot is actually used, security solutions will detect and neutralise it as quickly as they do malware created by humans. While some analysts voice concern that ChatGPT can even create unique malware for each particular victim, these samples would still exhibit malicious behaviour that a security solution would most probably notice.

What’s more, bot-written malware is likely to contain subtle errors and logical flaws, which means that full automation of malware coding has yet to be achieved.

Although the tool may be useful to attackers, defenders can also benefit from it. For instance, ChatGPT is already capable of quickly explaining what a particular piece of code does.

It comes into its own in security operations centre (SOC) conditions, where constantly overworked analysts can spare only a minimal amount of time for each incident, so any tool that speeds up the process is welcome.

In the future, users will probably see numerous specialised products: a reverse-engineering model to understand code better, a CTF-solving model, a vulnerability research model, and more.

“While ChatGPT doesn’t do anything outright malicious, it might help attackers in a variety of scenarios, for example, writing convincing targeted phishing emails. However, at the moment, ChatGPT definitely isn’t capable of becoming some sort of autonomous hacking AI.

The malicious code that the neural network generates will not necessarily be working at all and would still require a skilled specialist to improve and deploy it. Though ChatGPT doesn’t have an immediate impact on industry and doesn’t change the cybersecurity game, the next generations of AI probably will.

In the next few years, we may see how large language models, both trained on natural language and programming code, are adapted for specialised use-cases in cybersecurity.

These changes can affect a wide range of cybersecurity activities, from threat-hunting to incident response. Therefore, cybersecurity companies will want to explore the possibilities that new tools will give, while also being aware of how this tech might aid cybercriminals,” comments Vladislav Tushkanov, a security expert at Kaspersky.